A motion-first approach to smarter factory robots

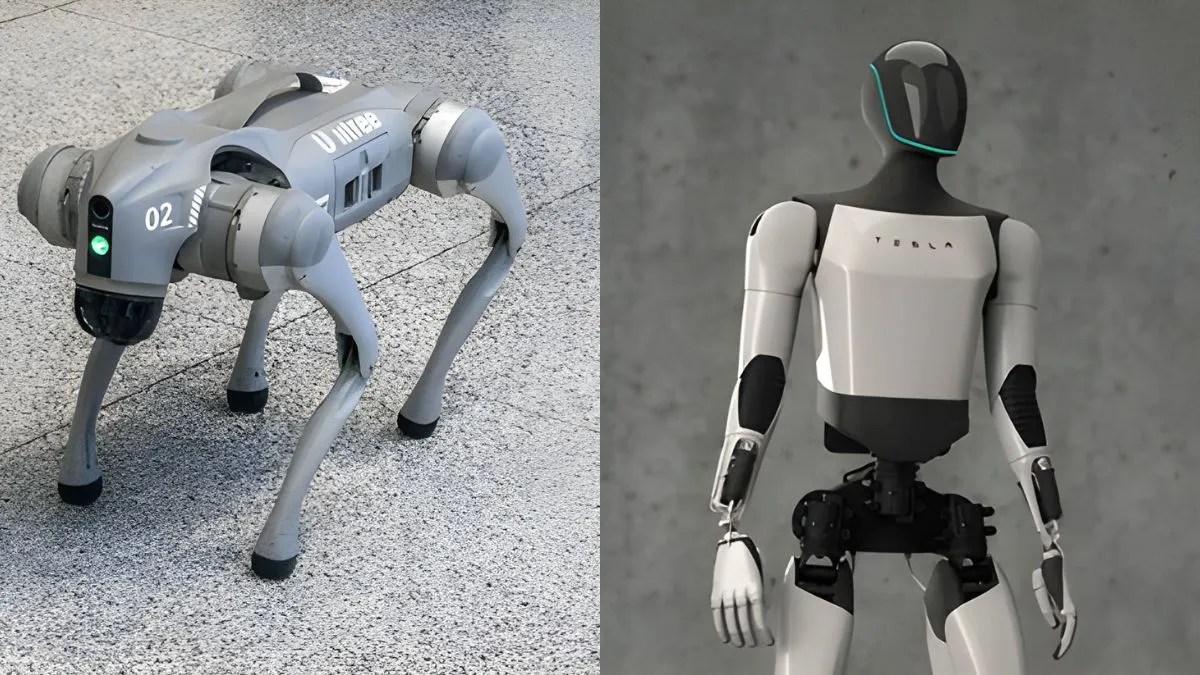

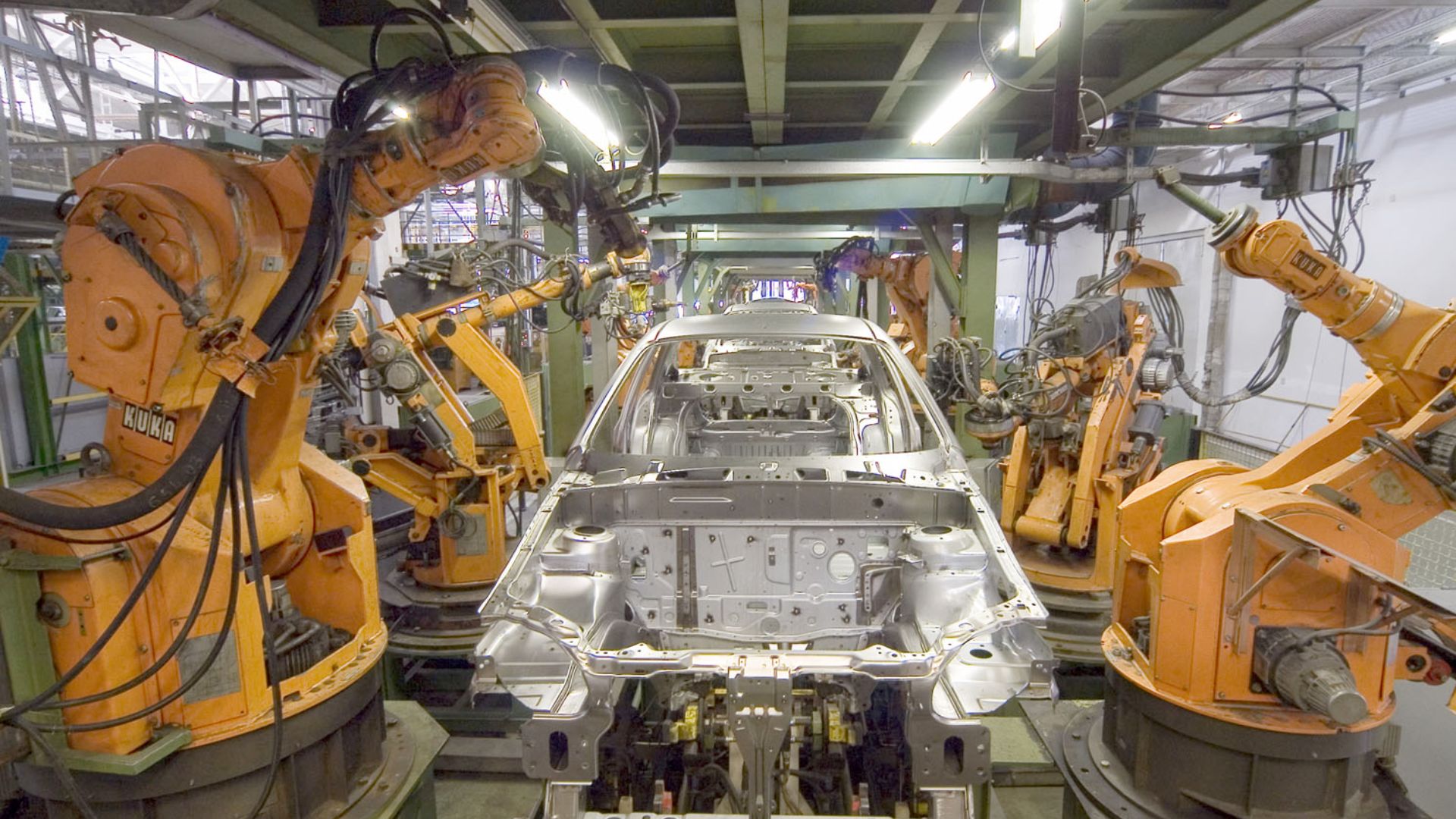

Manufacturing automation is growing fast. The global robotics market hit $71.78 billion in 2025 and is set to double by 2030. Robots already handle high-precision welding and painting in factories, but assembly work still relies heavily on manual labor.

A big reason is cost. According to the International Federation of Robotics (IFR), programming and integration account for 50 to 70 percent of a robot application’s total cost.

In other words, companies often spend more on making robots work than on the robots themselves. This customization locks robots into single, rigid tasks. When designs change — as they often do — manufacturers face a choice: invest in expensive reprogramming or revert to hand-operated processes.
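A quick back-of-the-envelope calculation shows how the IFR figure leads to that conclusion. The sketch below assumes, for illustration only, that whatever is not programming and integration is mostly the robot and its hardware. The function name is hypothetical.

```python
def integration_to_remainder_ratio(integration_share: float) -> float:
    """Return integration cost divided by all remaining cost.

    integration_share is the fraction of a robot application's total cost
    spent on programming and integration (0.5 to 0.7 per the IFR figure).
    Assumption: the remainder is mostly the robot and its hardware.
    """
    if not 0.0 < integration_share < 1.0:
        raise ValueError("share must be strictly between 0 and 1")
    return integration_share / (1.0 - integration_share)


low = integration_to_remainder_ratio(0.50)
high = integration_to_remainder_ratio(0.70)
print(f"{low:.2f}x to {high:.2f}x")
```

At the low end of the range, integration costs as much as everything else combined. At the high end, it costs roughly 2.3 times as much.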

Standard industrial robots deliver extraordinary accuracy, repeatedly hitting positions within 20 micrometers. But this precision is also a weakness: if parts arrive even slightly rotated or tilted, the system fails. The problem isn’t funding — it’s a fundamental engineering limitation that has dogged automation for decades.

CynLr, a startup based in Bengaluru, India, claims to have solved it.

CynLr’s CyRo robot doesn’t rely on deep learning pipelines or pre-trained models. Instead, it uses what the company calls “motion-first vision,” a system that learns by interacting with unfamiliar objects in real time.

Interesting Engineering (IE) spoke to Gokul N. A., the founder of CynLr, to understand how this approach differs from conventional robot vision and whether it can overcome the adaptation challenges that have limited industrial automation.

The problem with traditional robots

Most industrial robots in use today rely on machine learning (ML) pipelines that require extensive training beforehand. These models follow a recognition-first approach: they must identify an object before determining how to handle it.

“Standard approaches, whether supervised, unsupervised, or reinforcement learning, all require the system to be taught in advance,” Gokul explained to IE. “You have to collect millions of sample images, label them, set rules, and then train the model.”

That approach works when appearance is stable. Facial recognition, for instance, succeeds because facial features and proportions stay consistent even as lighting changes. The system matches stable patterns to stored representations without any physical interaction.

Real manufacturing environments, however, introduce far more variability.

Recognition bottleneck

Conventional industrial robots struggle with objects that look vastly different when the lighting changes, when they are positioned differently, or when parts of them are hidden.

Consider the case of a transparent bottle.

The same bottle might appear circular from one angle, cylindrical from another. Standard computer vision relies on consistent color and edge patterns for identification.

“A transparent object is different in every view,” Gokul noted. “It does not have a consistent colour or lighting pattern, and reflections constantly alter its appearance.”

Even if the object is correctly identified, successful handling requires understanding not just “what the object is” but “how it can be physically handled.” The robot must determine where the object can be safely grasped, whether surfaces will slip or break under pressure, and how weight is distributed.

None of this information can be reliably extracted from static images or color pattern analysis alone.

The customization trap

This recognition-first process forces engineers to eliminate variability from the environment to avoid expensive customization cycles. As a result, robots are confined to tasks such as welding and painting, where conditions are tightly controlled.

The result is a system that functions only in ideal environments and fails when real-world variability appears. This explains why robot programming and integration costs exceed hardware costs, and why many manufacturers abandon automation projects before completion.

How does CynLr differ?

CynLr takes a fundamentally different approach to handling objects in assembly lines. The company looked at how humans naturally learn to handle unfamiliar items.

“We start by asking how humans actually learn,” Gokul said. “Take a baby. You hand it an object it has never seen before. It can instantly pick it up, turn it around, and figure out how to handle it without ever having been shown that object or its label. It learns through interaction, not from a pre-trained database.”

Using this insight, CynLr abandoned the recognition-first approach in favor of motion-first vision. Here, the system is designed to detect and respond to movement rather than identify static patterns.

Physical architecture

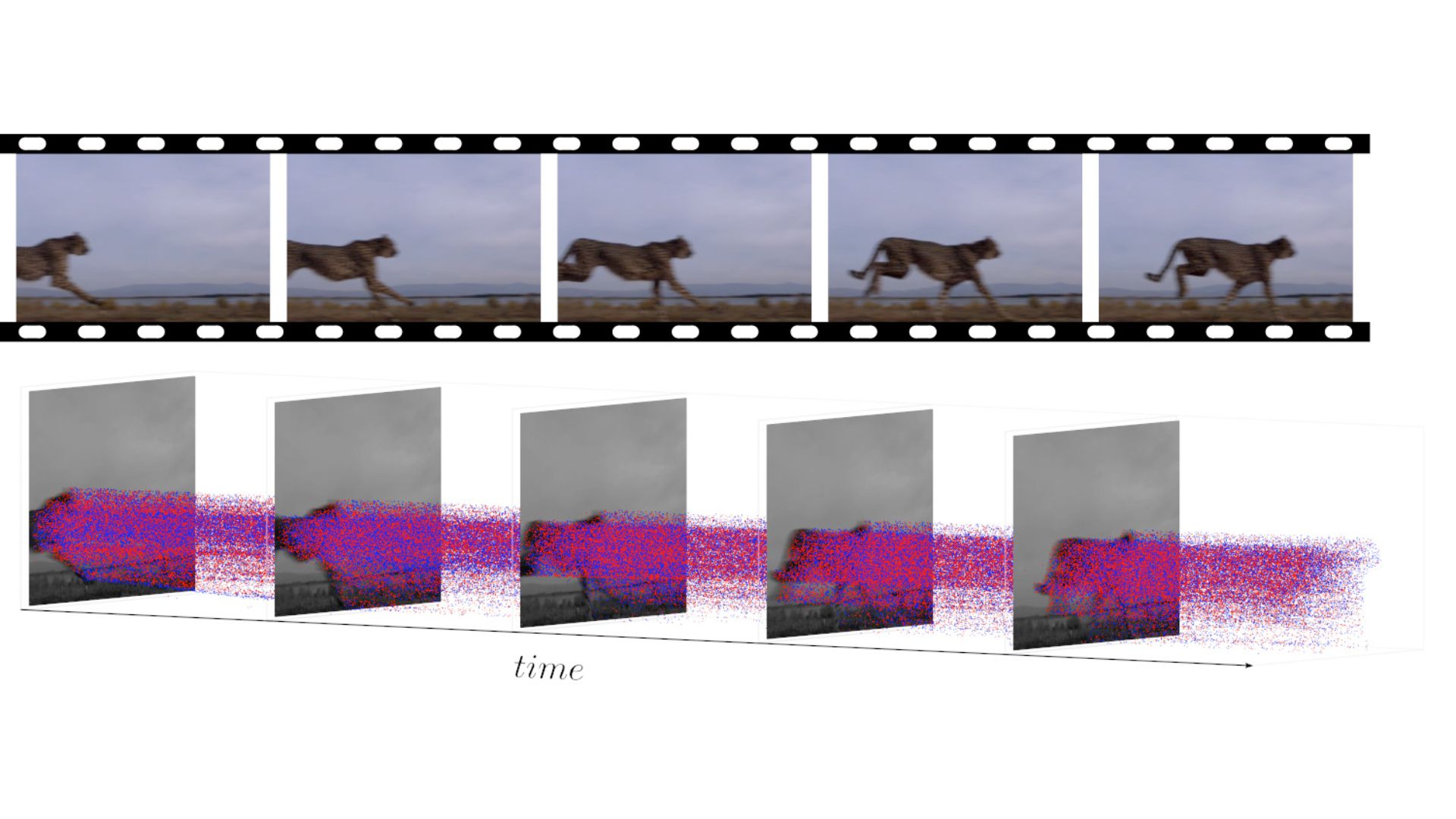

The company’s CyRo robot uses dual-lens event-based cameras that capture changes in intensity.

Event-based cameras, also known as neuromorphic cameras, are imaging sensors that only respond when pixel intensity changes occur, thereby detecting motion at the individual pixel level. Unlike traditional cameras, they do not capture sequential frames.
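A minimal sketch of the idea follows, assuming the commonly used model in which a pixel emits an event when its log intensity changes by more than a contrast threshold. Real event cameras work asynchronously per pixel and never produce full frames, so comparing two snapshots here is only an emulation. The names `Event` and `events_between` and the threshold value are illustrative assumptions, not CynLr's implementation.

```python
import math
from typing import List, NamedTuple, Sequence


class Event(NamedTuple):
    x: int
    y: int
    t: float
    polarity: int  # +1 = pixel got brighter, -1 = pixel got darker


def events_between(
    prev: Sequence[Sequence[float]],
    curr: Sequence[Sequence[float]],
    t: float,
    threshold: float = 0.2,  # assumed contrast threshold, arbitrary value
) -> List[Event]:
    """Emit one event per pixel whose log intensity changed by >= threshold."""
    eps = 1e-6  # avoids log(0) for fully dark pixels
    events = []
    for y, (row_prev, row_curr) in enumerate(zip(prev, curr)):
        for x, (p, c) in enumerate(zip(row_prev, row_curr)):
            delta = math.log(c + eps) - math.log(p + eps)
            if abs(delta) >= threshold:
                events.append(Event(x, y, t, 1 if delta > 0 else -1))
    return events


before = [[1.0, 1.0], [1.0, 1.0]]
after = [[1.0, 2.0], [0.5, 1.0]]
for event in events_between(before, after, t=0.001):
    print(event)
```

Only the two pixels that changed produce output. The unchanged pixels generate nothing, which is why a static background drops out of the data stream.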

The dual-lens configuration adds real-time depth perception without requiring structured light or time-of-flight sensors.

By comparing when events occur, and how strong they are, across the two lenses, the system builds a three-dimensional understanding of object contours and spatial relationships during motion. Together, event sensing and stereo depth give the robot real-time spatial awareness that adapts to any object without prior training.
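The article does not describe how CyRo computes depth. One standard way to get depth from two lenses, assumed here purely for illustration, is rectified stereo triangulation: depth equals focal length times baseline divided by disparity. The sketch pairs a left-lens event with the right-lens event on the same row that is closest in time, then triangulates. All names, the matching rule, and the parameter values are hypothetical.

```python
from typing import List, NamedTuple, Optional


class Event(NamedTuple):
    x: int
    y: int
    t: float
    polarity: int


def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Rectified stereo triangulation: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def match_event(left: Event, right_events: List[Event], max_dt: float = 1e-3) -> Optional[Event]:
    """Pick the right-lens event on the same row, with the same polarity,
    closest in time to the left event (assumed matching rule)."""
    candidates = [
        r for r in right_events
        if r.y == left.y
        and r.polarity == left.polarity
        and abs(r.t - left.t) <= max_dt
        and r.x < left.x  # positive disparity in a rectified pair
    ]
    if not candidates:
        return None
    return min(candidates, key=lambda r: abs(r.t - left.t))


def event_depth(left: Event, right_events: List[Event],
                focal_px: float, baseline_m: float) -> Optional[float]:
    match = match_event(left, right_events)
    if match is None:
        return None
    return depth_from_disparity(focal_px, baseline_m, left.x - match.x)


left = Event(120, 50, 0.0100, 1)
rights = [
    Event(80, 50, 0.0101, 1),  # same row, nearly simultaneous: the match
    Event(90, 51, 0.0100, 1),  # different row
    Event(70, 50, 0.5000, 1),  # far too late
]
print(event_depth(left, rights, focal_px=800.0, baseline_m=0.1))
```

With a 40-pixel disparity, an 800-pixel focal length, and a 10 cm baseline, the event sits about 2 meters away. Timing does the matching work here, which is the piece a frame-based stereo rig would have to solve by comparing image patches.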

Event-based perception

When CyRo encounters an unknown object, the event-based system highlights contours in motion while filtering out static background elements that typically confuse conventional systems.

“The camera and the processing pipeline are designed to detect contours and motion first, not colour patterns,” Gokul explained. “It touches, rotates, and observes how the object responds. From those reactions, it quickly builds a working understanding of what can be picked, what can be pressed, and so on.”

The system selects a contour to interact with, and as it makes contact, it observes which other contours move in unison.

These moving elements are instantly associated with the same object—a real-time process that builds spatial understanding through physical interaction rather than visual analysis.
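The grouping step can be sketched as follows, under a strong simplifying assumption: each contour has a single 2D displacement measured after the robot nudges one of them, and "moving in unison" means nearly the same displacement as the touched contour. This handles pure translation only and would need extending for rotation. The function name, contour labels, and tolerances are all hypothetical.

```python
import math
from typing import Dict, Set, Tuple

Vector = Tuple[float, float]


def contours_moving_with(
    touched: str,
    displacements: Dict[str, Vector],
    tol: float = 0.5,         # assumed max difference in displacement (pixels)
    min_motion: float = 1.0,  # assumed minimum motion to count as "moved"
) -> Set[str]:
    """Return the contours whose displacement matches the touched contour's."""
    ref = displacements[touched]
    if math.hypot(ref[0], ref[1]) < min_motion:
        # The nudge did not move anything measurable, so nothing can be grouped.
        return {touched}
    return {
        contour_id
        for contour_id, (dx, dy) in displacements.items()
        if math.hypot(dx - ref[0], dy - ref[1]) <= tol
    }


observed = {
    "rim": (5.0, 0.1),          # the contour the robot pushed
    "label": (4.8, 0.0),        # moved almost identically
    "table_edge": (0.0, 0.0),   # static background
    "other_part": (0.2, -0.1),  # barely moved, a separate object
}
print(sorted(contours_moving_with("rim", observed)))
```

Here the rim and the label are grouped as one object, while the table edge and the neighboring part are left out. No appearance model is involved, which is why transparency does not matter to this step.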

This approach addresses the transparent bottle problem that stumps traditional systems. Traditional robots have trouble with changing reflections and shifting visual appearance, but CyRo focuses on how things feel and behave when touched and handled.

Dynamic learning

CyRo can change position or rotate the object to obtain alternative viewpoints if the first interaction falls short. This continues until the system has enough feedback to determine how to grasp, lift, and position the object without causing damage.
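As a rough sketch of that loop, assume each interaction yields a numeric "feedback gain" and the robot stops once the accumulated total crosses a threshold or it runs out of attempts. The scoring scheme, threshold, and function name are invented for illustration; the article does not say how CyRo decides it has seen enough.

```python
from typing import Sequence, Tuple


def explore_until_confident(
    gains: Sequence[float],
    required: float = 1.0,  # assumed amount of feedback needed to grasp safely
    max_attempts: int = 5,  # assumed cap to protect cycle time
) -> Tuple[bool, int]:
    """Accumulate feedback from successive interactions.

    Returns (succeeded, attempts_used).
    """
    total = 0.0
    attempts = 0
    for gain in gains[:max_attempts]:
        attempts += 1
        total += gain  # each new viewpoint or rotation adds some information
        if total >= required:
            return True, attempts
    return False, attempts


print(explore_until_confident([0.3, 0.4, 0.5, 0.2]))  # informative object
print(explore_until_confident([0.1] * 10))            # hard-to-read object
```

The first call succeeds on the third interaction. The second gives up after five attempts, the kind of case where a production cell would need a fallback.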

This movement-centric method removes the need for large training datasets, specialized equipment, and controlled environments, the constraints that keep conventional systems within narrow operating limits.

Real-world validation and challenges

CynLr’s approach has attracted validation from major automotive manufacturers.

General Motors is conducting trials with CyRo for assembly work on moving surfaces, where parts arrive in variable orientations and conditions beyond the capability of conventional automation.

DENSO’s pilot focuses on flexible manufacturing stations. “DENSO wanted a single platform that could move between tasks without hardware redesign,” Gokul said.

The pilots showcase CyRo in scenarios that play to its strengths: assembly on moving platforms where parts arrive unpredictably, handling of transparent and reflective components, and adaptation to new geometries without reprogramming.

CynLr reports deployment time reductions of 70 percent and cost reductions of over 30 percent compared to traditional robotic systems, primarily by eliminating custom fixture requirements and programming overhead.

The company builds on event-based vision technology that is already established in industrial settings. Existing systems process more than 1,000 objects per second while operating at speeds above 10 meters per second, evidence that the sensor technology has moved from the laboratory to industrial-grade use.

The integration challenge

However, incorporating adaptive robots into existing assembly lines faces significant practical hurdles. Most factories run on predictable automation that’s been programmed ahead of time.

Industry reports confirm that initial setup and integration remain the largest expense, often surpassing robot hardware costs. Changes to production line layouts, employee training, and software pipeline modifications all require additional budget and time.

Moreover, CyRo’s real-time learning may slow cycle times compared to pre-programmed robots optimized for speed and repeatability—a critical trade-off for high-throughput manufacturers.

Questions also remain about failure modes. If CyRo cannot quickly make sense of an object, or if tactile feedback proves unreliable, production could stall.

Long-term financial viability depends on weighing implementation costs against gains in operational agility and efficiency as rollouts expand beyond initial pilots.

A flexible future?

Gokul envisions a fundamental shift in how factories operate by 2030.

“Factory floors will look less like conveyor belts and more like cloud servers,” he predicted. “Instead of a fixed sequence, you will have flexible cells. Robots will switch tasks on the fly. Parts will take different paths depending on customization.”

If proven at scale, this flexibility could enable mass individualization — cars built to order, phones customized for unique handling preferences. Whether CynLr’s motion-first vision will power that transformation or remain a niche solution depends on how it performs beyond pilot programs.
