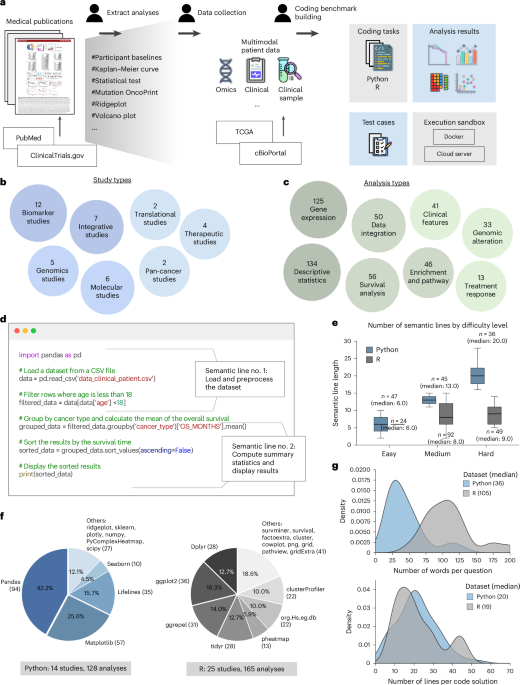

Making large language models reliable data science programming copilots for biomedical research

Radenkovic, D., Keogh, S. B. & Maruthappu, M. Data science in modern evidence-based medicine. J. R. Soc. Med. 112, 493–494 (2019).

Ellis, L. D. To meet future needs, health care leaders must look at the data (science). Harvard T.H. Chan School of Public Health (accessed 16 September 2024).

Meyer, M. A. Healthcare data scientist qualifications, skills, and job focus: a content analysis of job postings. J. Am. Med. Inf. Assoc. 26, 383–391 (2019).

Chen, M. et al. Evaluating large language models trained on code. Preprint at (2021).

Li, Y. et al. Competition-level code generation with AlphaCode. Science 378, 1092–1097 (2022).

Luo, Z. et al. WizardCoder: empowering code large language models with Evol-Instruct. In The Twelfth International Conference on Learning Representations 1–21 (OpenReview, 2023).

Lozhkov, A. et al. StarCoder 2 and The Stack v2: the next generation. Preprint at (2024).

Zhang, F. et al. RepoCoder: repository-level code completion through iterative retrieval and generation. In The 2023 Conference on Empirical Methods in Natural Language Processing 2471–2484 (Association for Computational Linguistics, 2023).

Parvez, M. R., Ahmad, W., Chakraborty, S., Ray, B. & Chang, K.-W. Retrieval augmented code generation and summarization. In Findings of the Association for Computational Linguistics: EMNLP 2021 2719–2734 (Association for Computational Linguistics, 2021).

Wang, Z. Z. et al. CodeRAG-Bench: can retrieval augment code generation? In Findings of the Association for Computational Linguistics: NAACL 2025 3199–3214 (Association for Computational Linguistics, 2025).

Chen, X., Lin, M., Schärli, N. & Zhou, D. Teaching large language models to self-debug. In The Twelfth International Conference on Learning Representations 1–80 (OpenReview, 2024).

Austin, J. et al. Program synthesis with large language models. Preprint at (2021).

Hendrycks, D. et al. Measuring coding challenge competence with APPS. In Thirty-Fifth Conference on Neural Information Processing Systems Datasets and Benchmarks Track 1–11 (OpenReview, 2021).

Liu, J., Xia, C. S., Wang, Y. & Zhang, L. Is your code generated by ChatGPT really correct? Rigorous evaluation of large language models for code generation. Adv. Neural Inf. Process. Syst. 36, 21558–21575 (2023).

Jimenez, C. E. et al. SWE-bench: can language models resolve real-world GitHub issues? In The Twelfth International Conference on Learning Representations 1–51 (OpenReview, 2024).

Huang, J. et al. Execution-based evaluation for data science code generation models. In Proc. Fourth Workshop on Data Science with Human-in-the-Loop (Language Advances) 28–36 (Association for Computational Linguistics, 2022).

Lai, Y. et al. DS-1000: a natural and reliable benchmark for data science code generation. In International Conference on Machine Learning 18319–18345 (PMLR, 2023).

Tayebi Arasteh, S. et al. Large language models streamline automated machine learning for clinical studies. Nat. Commun. 15, 1603 (2024).

Tang, X. et al. BioCoder: a benchmark for bioinformatics code generation with large language models. Bioinformatics 40, i266–i276 (2024).

Majumder, B. P. et al. DiscoveryBench: towards data-driven discovery with large language models. In The Thirteenth International Conference on Learning Representations 1–34 (OpenReview, 2025).

Wang, Z., Danek, B. & Sun, J. BioDSA-1K: benchmarking data science agents for biomedical research. Preprint at (2025).

TrialMind Data Science Assistant. Keiji AI. (2025).

cBioPortal for cancer genomics. cBioPortal (accessed 17 September 2024).

Hello GPT-4o. OpenAI (accessed 17 September 2024).

GPT-4o mini: advancing cost-efficient intelligence. OpenAI (accessed 17 September 2024).

Claude 3.5 Sonnet. Anthropic (accessed 17 September 2024).

Introducing the next generation of Claude. Anthropic (accessed 17 September 2024).

Reid, M. et al. Gemini 1.5: unlocking multimodal understanding across millions of tokens of context. Preprint at (2024).

OpenAI o3-mini: pushing the frontier of cost-effective reasoning. OpenAI (accessed 6 June 2025).

Grattafiori, A. et al. The Llama 3 herd of models. Preprint at (2024).

Guo, D. et al. DeepSeek-R1 incentivizes reasoning capability in LLMs via reinforcement learning. Nature 645, 633–638 (2025).

Rozière, B. et al. Code Llama: open foundation models for code. Preprint at (2024).

Hui, B. et al. Qwen2.5-Coder technical report. Preprint at (2024).

Wei, J. et al. Chain-of-thought prompting elicits reasoning in large language models. Adv. Neural Inf. Process. Syst. 35, 24824–24837 (2022).

Brown, T. B. et al. Language models are few-shot learners. In Proc. 34th International Conference on Neural Information Processing Systems 1877–1901 (Curran Associates, 2020).

Khattab, O. et al. DSPy: compiling declarative language model calls into state-of-the-art pipelines. In The Twelfth International Conference on Learning Representations 1–31 (OpenReview, 2024).

Lewis, P. et al. Retrieval-augmented generation for knowledge-intensive NLP tasks. Adv. Neural Inf. Process. Syst. 33, 9459–9474 (2020).

Yao, S. et al. ReAct: synergizing reasoning and acting in language models. In International Conference on Learning Representations 1–33 (OpenReview, 2023).

Zehir, A. et al. Mutational landscape of metastatic cancer revealed from prospective clinical sequencing of 10,000 patients. Nat. Med. 23, 703–713 (2017).

Welch, J. S. et al. TP53 and decitabine in acute myeloid leukemia and myelodysplastic syndromes. N. Engl. J. Med. 375, 2023–2036 (2016).

Mostavi, M., Chiu, Y.-C., Huang, Y. & Chen, Y. Convolutional neural network models for cancer type prediction based on gene expression. BMC Med. Genomics 13, 44 (2020).

Yen, P.-Y., Wantland, D. & Bakken, S. Development of a customizable health IT usability evaluation scale. In AMIA Annual Symposium Proceedings Vol. 2010, 917 (American Medical Informatics Association, 2010).

Wang, Z. et al. Accelerating clinical evidence synthesis with large language models. npj Digit. Med. 8, 509–523 (2025).

Lin, J., Xu, H., Wang, Z., Wang, S. & Sun, J. Panacea: a foundation model for clinical trial search, summarization, design, and recruitment. Preprint at (2024).

Jin, Q. et al. Matching patients to clinical trials with large language models. Nat. Commun. 15, 9074 (2024).

Wang, X. et al. OpenHands: an open platform for AI software developers as generalist agents. In The Thirteenth International Conference on Learning Representations 1–8 (OpenReview, 2025).

Majumder, B. P. et al. Position: data-driven discovery with large generative models. In Proc. 41st International Conference on Machine Learning 34350–34382 (JMLR, 2024).

Grossman, R. L. et al. Toward a shared vision for cancer genomic data. N. Engl. J. Med. 375, 1109–1112 (2016).

Jupyter. Jupyter (accessed 23 September 2024).

Van Veen, D. et al. Adapted large language models can outperform medical experts in clinical text summarization. Nat. Med. 30, 1134–1142 (2024).

Nie, F., Chen, M., Zhang, Z. & Cheng, X. Improving few-shot performance of language models via nearest neighbor calibration. Preprint at (2022).

New embedding models and API updates. OpenAI (accessed 23 September 2024).

Shin, T., Razeghi, Y., Logan IV, R. L., Wallace, E. & Singh, S. AutoPrompt: eliciting knowledge from language models with automatically generated prompts. In Proc. 2020 Conference on Empirical Methods in Natural Language Processing 4222–4235 (Association for Computational Linguistics, 2020).

Vertex AI search. Google (accessed 23 September 2024).

Madaan, A. et al. Self-refine: iterative refinement with self-feedback. Adv. Neural Inf. Process. Syst. 36, 46534–46594 (2023).
